6 A/B Testing Examples From Real Businesses to Inspire You

Barbara Bartucz

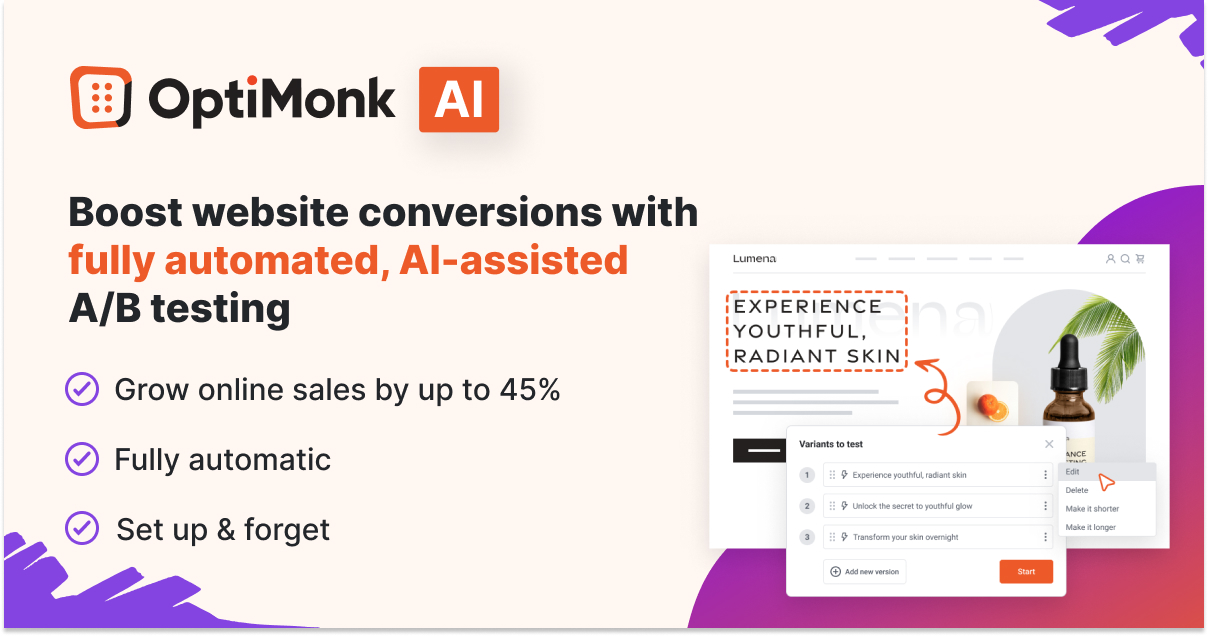

A/B testing is one of the most effective conversion rate optimization tactics in digital marketing. By experimenting with landing page variations, you can maximize your existing website traffic and increase revenue.

However, many ecommerce store owners struggle to know where to start when optimizing their landing pages.

If that sounds like you, read on—we’re about to explore six real-life A/B test success stories from top ecommerce brands. These case studies highlight the power of small changes on a landing page and offer actionable insights that can inspire your own split testing strategies.

Let’s jump right in!

A/B testing example #1: Bukvybag

Split testing homepage headlines led to a 45% increase in orders

Bukvybag, a direct-to-consumer store specializing in bags for women, faced challenges with a low conversion rate on their home page.

They were unsure whether their headline was resonating with their target audience.

To address this problem, they used OptiMonk’s Dynamic Content feature and ran a split test on their home page headline. This helped them uncover the headlines that lured in the most customers.

Bukvybag’s original headline was “Versatile bags & accessories.” They wanted to test variations to try to boost their conversion rates.

These were the headlines they experimented with, each of which focused on a different value proposition:

- Variant A: Stand out from the crowd with our fashion-forward and unique bags

- Variant B: Discover the ultimate travel companion that combines style and functionality

- Variant C: Premium quality bags designed for exploration and adventure

To come up with headline ideas, they used OptiMonk’s Smart Headline Generator (powered by AI).

This split test strategy helped Bukvybag achieve statistically significant results and feel confident that their increased conversion rate wasn’t down to random chance.

In fact, the 45% upswing in orders they achieved thanks to A/B testing proved to be sustainable in the long run.

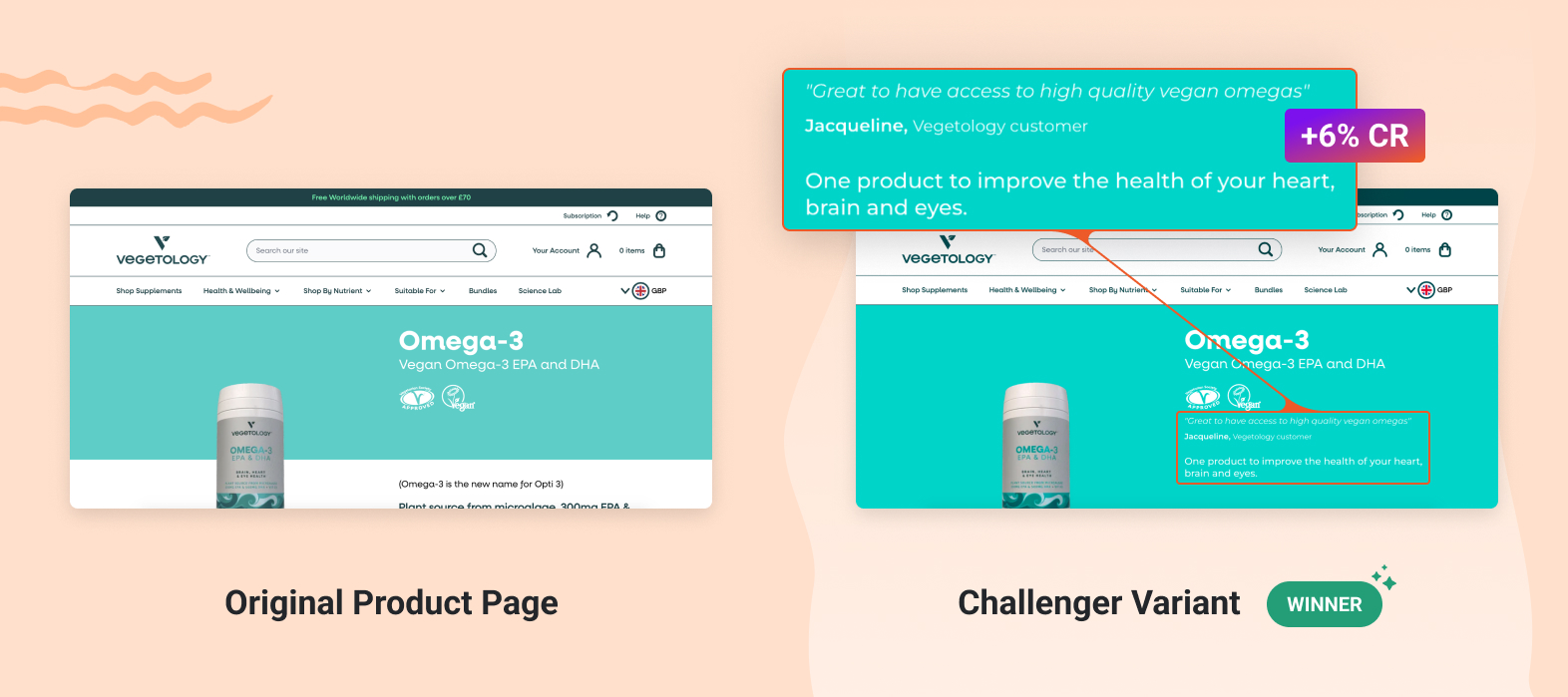

A/B testing example #2: Vegetology

Adding an above-the-fold testimonial to product pages achieved a 10.3% increase in unique purchases

Social proof is a key factor in driving sales, helping first-time website visitors feel confident in the quality of products that an unfamiliar store is offering.

In fact, research has found that 75% of consumers actively seek out reviews and testimonials before making a purchase.

Vegetology’s products have generated many amazing reviews, but they weren’t effectively showcasing them. Their testimonials were buried at the bottom of their product pages, where few visitors would scroll far enough to see them.

It made sense for Vegetology to consider adding social proof to the above-the-fold section of the existing page, but that’s a big change that could also backfire. In a situation like this, the best thing to do is use A/B testing to collect data and user insights, and find out for sure whether it’s the right move.

They used an OptiMonk Embedded Content campaign to create multiple versions of their product pages. They found that the challenger variant that included the customer testimonials at the top of the page led to a 6% increase in the conversion rate and a 10.3% increase in unique purchases.

Once Vegetology saw those results, they were able to roll out the change permanently and increase revenue over the long term.

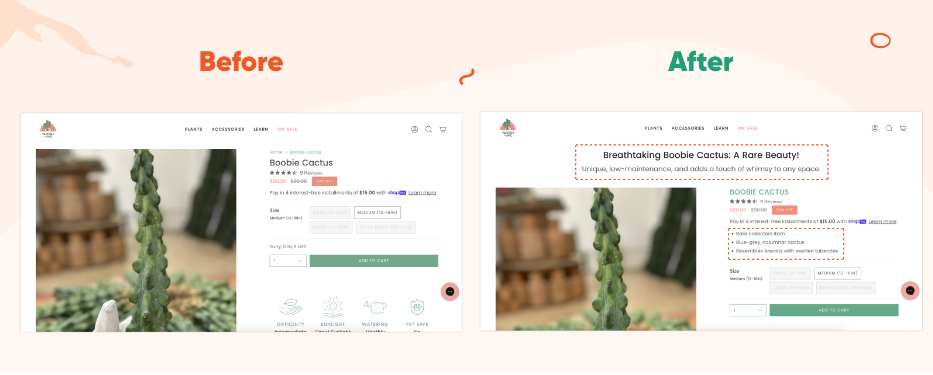

A/B testing example #3: Varnish & Vine

Increased their revenue by 43% after multivariate testing their product pages

Varnish & Vine wanted to optimize their product pages in order to convert more traffic into paying customers. Unlike Vegetology, which split tested a single change (the placement of customer testimonials), Varnish & Vine wanted to test multiple elements on their pages at once.

They also have more than 70 product pages, which made it difficult to optimize every page manually.

They used OptiMonk’s Smart Product Page Optimizer to test each of their product pages simultaneously and conduct a multivariate test on a number of elements like their headlines, benefit lists, and calls-to-action.

Unlike a traditional A/B test, which compares changes to a single element, a multivariate test let them vary several page elements at the same time.
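To make the difference concrete, here is a minimal sketch of how the number of page versions grows when several elements are varied together. It assumes a full-factorial design (every combination is tested), which is one common way to run a multivariate test; the case study does not say how OptiMonk’s tool combines elements, and the element names and variant texts below are hypothetical placeholders.

```python
from itertools import product

# Hypothetical variants for three page elements (placeholder text, not from the case study).
elements = {
    "headline": ["original headline", "AI headline"],
    "benefit_list": ["no benefit list", "AI benefit list"],
    "cta": ["Add to cart", "Buy now"],
}


def full_factorial(elements):
    """Return every combination of element variants as a list of dicts."""
    names = list(elements)
    return [
        dict(zip(names, combo))
        for combo in product(*(elements[name] for name in names))
    ]


combinations = full_factorial(elements)
print(len(combinations))  # 2 * 2 * 2 = 8 page versions
```

Because each added element multiplies the number of versions, a multivariate test generally needs more traffic than a simple A/B test to reach a reliable result.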

The AI-powered tool analyzed Varnish & Vine’s product pages and crafted captivating headlines, subheadlines, and lists of benefits for each product page automatically. These additions were designed to resonate with the target audience and boost conversions.

Based on the test results, Varnish & Vine saw that the AI-optimized product pages resulted in a 12% increase in orders and an impressive 43% increase in revenue.

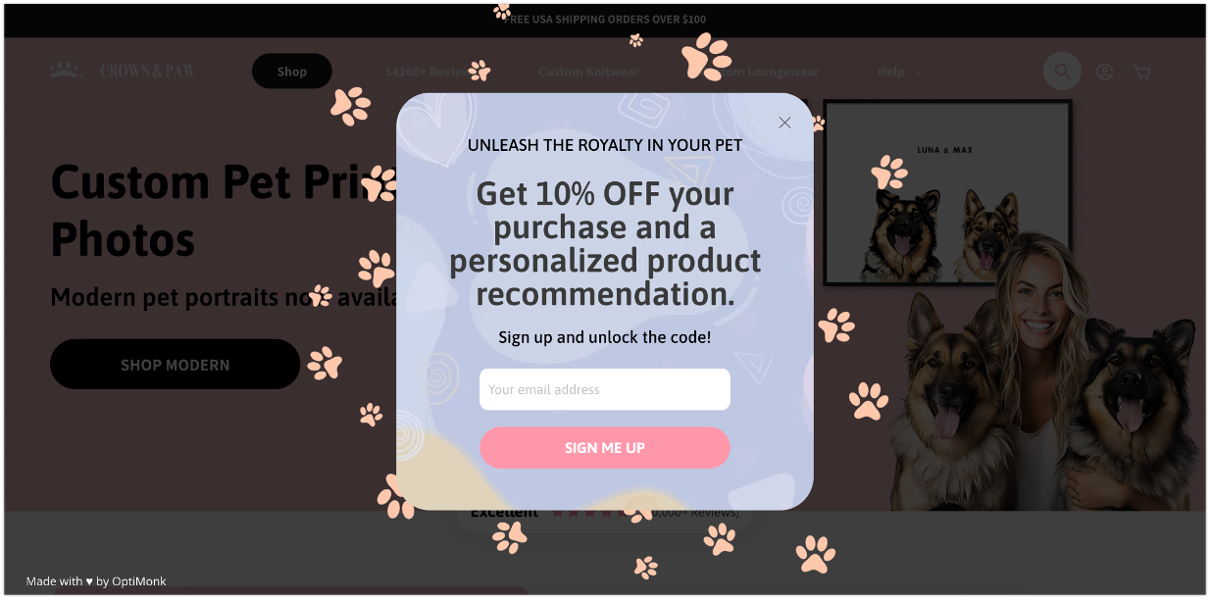

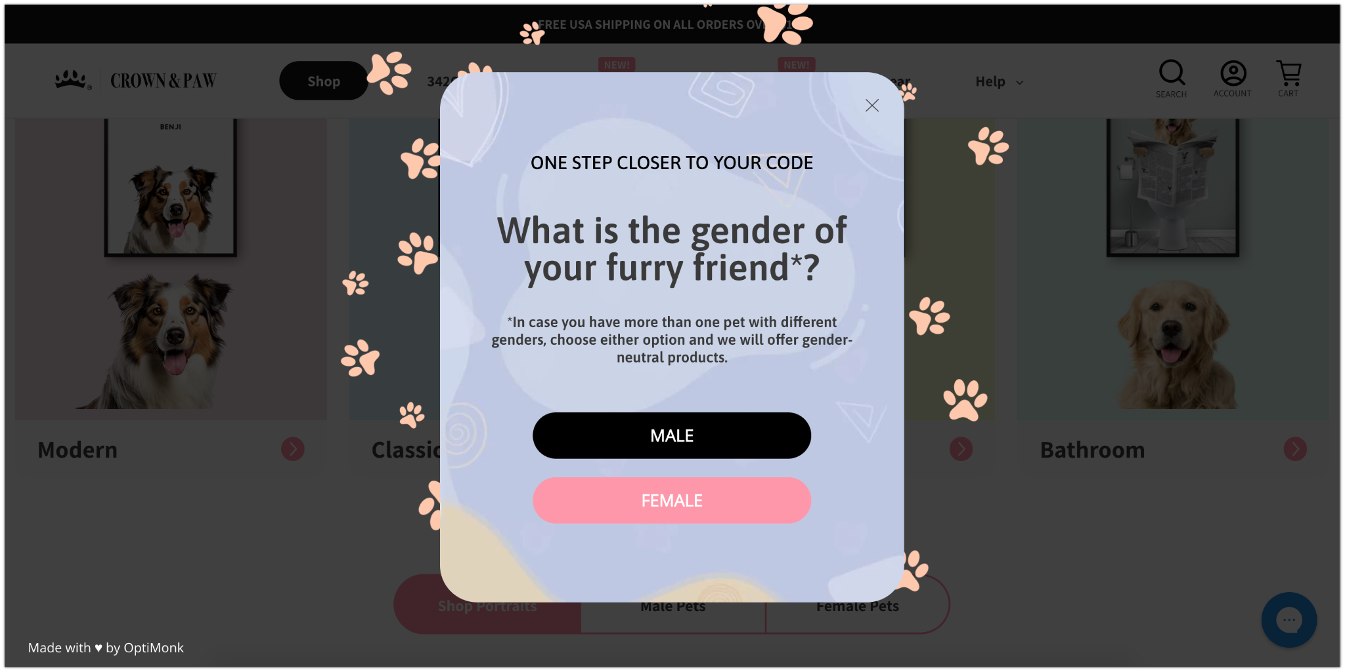

A/B testing example #4: Crown & Paw

Increased their email popup conversion rate by 2.5x

Crown & Paw took a different approach to their marketing strategy. Instead of A/B testing a landing page, they focused on their email list-building efforts.

Initially, they used a simple Klaviyo email popup to generate leads, but it had a poor conversion rate. They decided to try a multi-step popup to collect more customer data and emails from their website visitors.

First, they tempted visitors with a good discount, plus the promise of personalized product recommendations.

Then, they asked a few simple questions to learn about their interests and preferences.

Finally, based on the answers provided by the ~95% of visitors who answered the questions, they recommended relevant products in the third step of the popup. They also displayed the discount code right there.

After A/B testing, they found that the multi-step popup had a conversion rate of 4.03%, which was a 2.5x increase compared to the simple Klaviyo email popup they had been using before.

This highlights the importance of optimizing your email popups: doing so can significantly boost user engagement and lead to higher conversion rates.

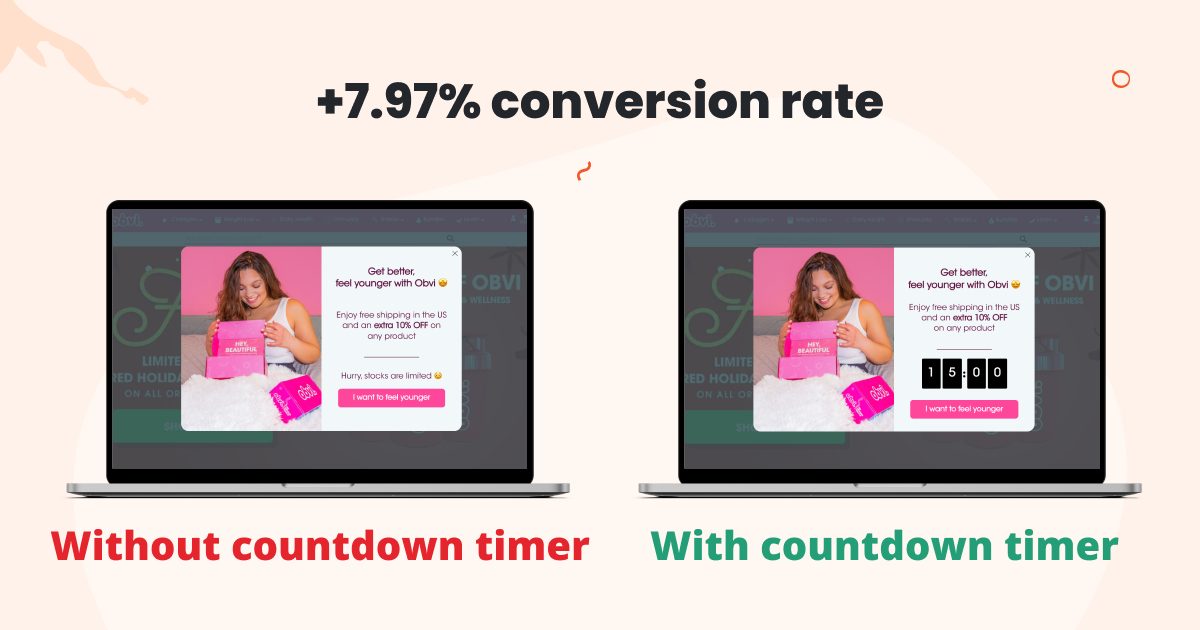

A/B testing example #5: Obvi

Adding a countdown timer increased popup conversion rates by 7.97%

Obvi wanted to run tests to discover whether adding a countdown timer to their discount popup would increase conversion rates.

They hypothesized that the countdown timer would increase the sense of urgency and result in higher coupon redemption rates, boosting revenues.

They created two versions of the discount popup, one with a timer and the other without. The variant with a countdown timer converted 7.97% better than the one without, indicating that the timer was effective at increasing urgency and conversions.
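A figure like “converted 7.97% better” is most naturally read as a relative lift: the difference between the two conversion rates divided by the control’s rate. The case study does not say which definition was used, so treat that reading as an assumption. The sketch below shows the calculation with hypothetical visitor and conversion counts (Obvi’s raw numbers are not given here).

```python
def conversion_rate(conversions, visitors):
    """Share of visitors who converted, as a fraction between 0 and 1."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors


def relative_lift(control_rate, variant_rate):
    """Relative improvement of the variant over the control."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate


# Hypothetical counts, for illustration only.
control = conversion_rate(500, 10_000)  # popup without a timer: 5.00%
variant = conversion_rate(540, 10_000)  # popup with a timer: 5.40%
lift = relative_lift(control, variant)
print(f"{lift:.2%}")  # 8.00%
```

Note that a relative lift of 8% here corresponds to an absolute gain of only 0.4 percentage points, so it helps to report both numbers.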

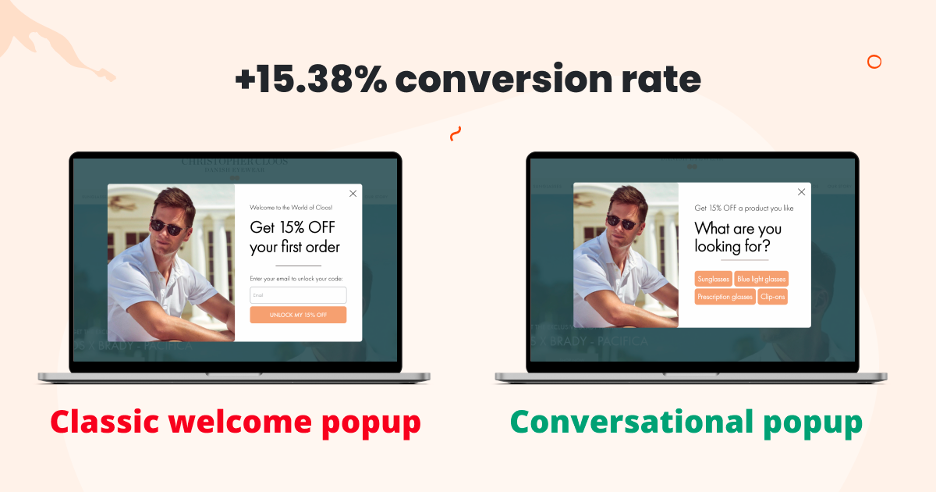

A/B testing example #6: Christopher Cloos

Testing a classic welcome popup against a conversational popup resulted in a 15.38% increase in conversion rates

A/B testing different types of marketing campaigns is a way to discover which type resonates better with your target audience.

This goes beyond testing two versions of the same web page or marketing campaign, and involves trying out completely different approaches.

In this case, the Christopher Cloos team tested a classic welcome popup against a more personalized conversational popup.

After reaching statistical significance by showing both versions to a large enough sample of visitors, they found that the conversational popup converted at a higher rate (15.38% higher, to be exact).
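How large is “large enough”? A rough estimate can be made before the test starts. The sketch below uses the standard normal-approximation formula for comparing two proportions; the 5% baseline rate, the significance level, and the statistical power are assumptions chosen for illustration and are not figures from the Christopher Cloos test.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect a given relative lift.

    Uses the normal approximation for a two-sided test of two proportions.
    """
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be between 0 and 1")
    if relative_lift == 0:
        raise ValueError("relative_lift must be non-zero")
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical inputs: a 5% baseline conversion rate and a 15% relative lift.
needed = sample_size_per_variant(0.05, 0.15)
print(needed)
```

The smaller the lift you want to detect, the more visitors each version needs, which is why low-traffic pages often take weeks to produce a trustworthy result.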

FAQ

What is A/B testing?

A/B testing, also known as split testing, is a method used in marketing to compare two versions of something to determine which one performs better. It involves creating two variations (A and B) of a webpage, email, ad, or other marketing asset, and then randomly showing these variations to different groups of users. By measuring the performance of each variation, you can identify which version is more effective in achieving its goals.
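One way to picture the “randomly showing these variations to different groups” step is hash-based bucketing, sketched below. This is an illustrative approach, not a description of how OptiMonk or any other tool assigns visitors; the function name, visitor IDs, and experiment name are all hypothetical.

```python
import hashlib


def assign_variant(visitor_id, experiment, variants=("A", "B")):
    """Deterministically assign a visitor to one variant of an experiment.

    Hashing the experiment name together with the visitor ID spreads visitors
    roughly evenly across variants, and a returning visitor always sees the
    same version.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical visitor and experiment identifiers.
print(assign_variant("visitor-123", "homepage-headline"))
```

Keeping the assignment stable per visitor matters because someone who sees version A on Monday and version B on Tuesday would blur the comparison between the two groups.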

Why is A/B testing important?

A/B testing is crucial because it allows you to make data-driven decisions rather than relying on assumptions or intuition. By testing different elements such as headlines, images, calls to action, or layout variations, you can improve conversion rates and increase sales. Without A/B testing, you may miss out on opportunities to improve your campaigns and may not fully understand what resonates best with your audience.

How often should you conduct A/B testing?

The frequency of A/B testing depends on various factors, including the size of your audience, the level of traffic to your website or platform, and the rate of change in your industry. In general, it’s advisable to conduct A/B tests regularly to continuously refine your marketing strategies and stay competitive. However, it’s essential to strike a balance between testing frequently enough to make meaningful improvements and not overwhelming your audience with too many changes at once.

What is statistical significance?

Statistical significance indicates whether the difference observed in an experiment is unlikely to be explained by random chance alone. It helps determine if the observed effect is reliable. Sample size, effect size, and variability in the data all affect statistical significance: larger samples and larger effects make a statistically significant result more likely, while noisier data makes it less likely.
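For conversion rates, one common way to check significance is a two-proportion z-test. The sketch below is a minimal version using only Python’s standard library; the visitor and conversion counts are hypothetical, and the 0.05 threshold is a widely used convention rather than a rule taken from the case studies above.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # rate assuming no real difference
    if pooled in (0, 1):
        raise ValueError("cannot test when no one or everyone converted")
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: 500 of 10,000 visitors converted on A, 580 of 10,000 on B.
z, p_value = two_proportion_z_test(500, 10_000, 580, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("significant at 0.05" if p_value < 0.05 else "not significant at 0.05")
```

A small p-value means a gap this large would rarely appear if the two versions truly performed the same; it does not say how big the real improvement is.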

Learn more about A/B testing

Curious to dive deeper into A/B testing?

Whether you’re looking to understand the different types of A/B tests, grasp the concept of statistical significance, or learn how to run A/B tests effectively, there’s plenty more to explore.

For a comprehensive overview, including tips on optimizing your conversion rate and achieving better results, check out this insightful video:

This resource will help you master the essentials of A/B testing and apply them confidently to your own digital marketing strategies.

Wrapping up

Each of these examples has shown how controlled experiments can help you improve your home page, landing pages, and marketing campaigns.

The more tests you run, the more confident you can be that your website is performing optimally and making you the most money possible.

Once you have the right A/B testing tool, it’ll be easy to optimize each and every web page on your site.

Looking to make A/B testing effective and effortless? Try Smart A/B testing and automate 95% of your CRO efforts!
